The AI World Wasn’t Expecting DeepSeek

In a field dominated by giant names—OpenAI, Google DeepMind, Meta—you’d think only billion-dollar models could shake up the landscape. But in late 2023, a new player stepped into the arena and turned heads not by being massive, but by being clever.

Enter DeepSeek, a family of AI models whose standout result came from a relatively small mathematical change that unlocked big performance gains. No expensive tricks. No massive hardware demands. Just smart, lean innovation.

So what’s the deal? Why are researchers, companies, and even governments talking about this like it’s the next big thing? Let’s break it down.

What Is DeepSeek?

DeepSeek is a family of language models developed by a team of researchers in China. At first glance, it looked like just another AI model trying to compete with the likes of GPT-4. But when they published their research, something interesting happened: the community actually read it—and got excited.

Because hidden in the paper was a simple yet brilliant mathematical improvement. It wasn’t flashy. It didn’t involve thousands of GPUs. But it worked.

Their tweak made the model more efficient, meaning it could perform just as well as (or better than) larger models while using less power and fewer resources.

In a world obsessed with scaling up, DeepSeek made a compelling case for scaling smart.

The Simple Math That Sparked the Buzz

Let’s be real: most AI papers are packed with jargon. But DeepSeek’s insight was surprisingly digestible. They optimized parts of the attention mechanism—the same core idea that made Transformers revolutionary in the first place.

Instead of overcomplicating things, they made targeted improvements to how the model processed and weighed information. Think of it like adjusting the tuning of an engine so it burns fuel more efficiently—same car, better mileage.

This adjustment led to a model that was faster to train, cheaper to run, and still high-performing. And just like that, DeepSeek wasn’t just “another model”—it was a serious alternative.
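
If you have never seen what "attention" actually computes, here is a minimal sketch in plain Python. To be clear, this is the standard scaled dot-product attention from the original Transformer design, not DeepSeek's modified version, which this post does not spell out. The function names and the toy numbers are made up for illustration.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Standard scaled dot-product attention over lists of vectors."""
    scale = math.sqrt(len(keys[0]))
    dim = len(values[0])
    outputs = []
    for q in queries:
        # Score this query against every key, then normalize the scores.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / scale for k in keys]
        weights = softmax(scores)
        # The output is a weighted average of the value vectors.
        outputs.append(
            [sum(w * v[j] for w, v in zip(weights, values)) for j in range(dim)]
        )
    return outputs

# Toy example: two tokens with 2-dimensional vectors (made-up numbers).
tokens = [[1.0, 0.0], [0.0, 1.0]]
result = attention(tokens, tokens, tokens)
print(result)
```

Each token ends up as a blend of every token's values, weighted by how well its query matches their keys. Every query is scored against every key, which is why this step gets expensive as inputs grow and why it is a natural place to look for savings.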

Why This Matters (Technically and Geopolitically)

DeepSeek is more than a technical paper. It’s a signal.

From a technical perspective, it showed that we’re not yet done discovering efficiencies in large language models. In fact, we may just be scratching the surface. While Big Tech races to build ever-larger models, DeepSeek suggests that there’s untapped potential in smarter, more elegant design.

But there’s a geopolitical layer too.

DeepSeek comes out of China, a country rapidly investing in foundational AI models and infrastructure. For years, Western companies dominated the AI landscape. But now, Chinese research teams are showing they can not only catch up but also innovate.

And that has big implications. As nations compete for AI leadership, models like DeepSeek become part of a larger narrative: Who controls the future of intelligence? Who sets the standards? Who shapes the ethics?

More with Less: A New Era of Efficient AI?

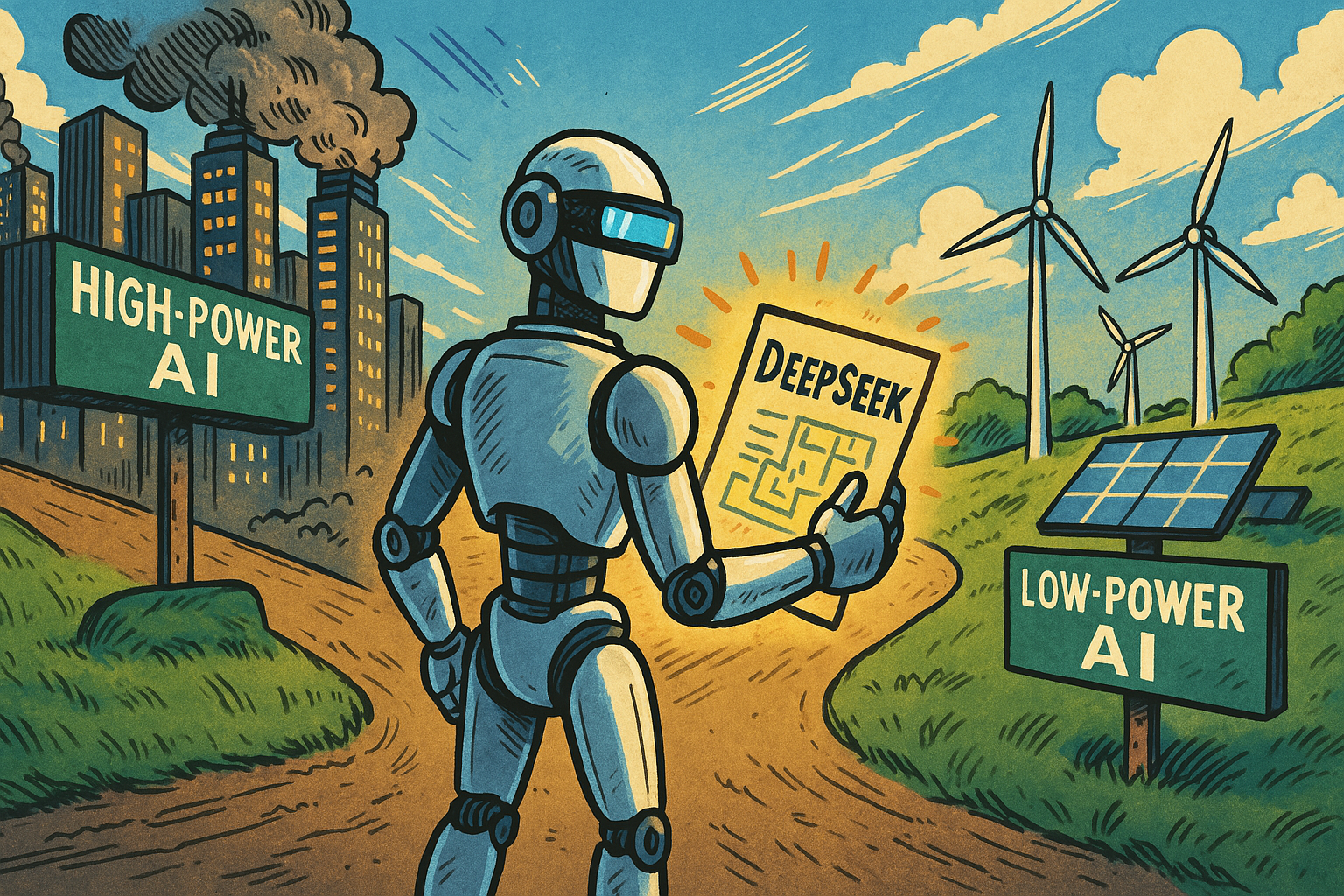

DeepSeek has also reignited a conversation that had been simmering beneath the surface: Does AI really need to be this big?

Most state-of-the-art models are trained on enormous datasets and require massive computational power, which means more energy consumption and more hardware. That’s not exactly sustainable, or accessible.

But with models like DeepSeek, the idea of low-power, high-efficiency AI is becoming real. Imagine models that could run on smaller servers, on laptops, even on your smartphone—without sacrificing intelligence.

This isn’t just convenient—it’s transformative.
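
To put rough numbers on "runs on a laptop," here is a back-of-the-envelope sketch of how much memory a model's weights need. The 7-billion-parameter size is a hypothetical example, not a figure from the DeepSeek paper, and the helper name is invented for illustration. It counts the weights only and ignores everything else a running model keeps in memory.

```python
def weight_memory_gb(num_params, bits_per_param):
    """Approximate memory for the weights alone, in gigabytes (10^9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9

# Hypothetical 7-billion-parameter model at three common precisions.
for bits in (16, 8, 4):
    print(f"{bits}-bit weights: {weight_memory_gb(7e9, bits):.1f} GB")
```

Under these assumptions the weights shrink from 14.0 GB at 16 bits to 3.5 GB at 4 bits, which is roughly the difference between needing a dedicated server GPU and fitting in the memory of an ordinary laptop.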

The Edge Is Coming

Here’s where it gets really exciting.

As models become more efficient, we edge closer (pun intended) to a world where AI runs locally on your devices. That’s called Edge AI—and it’s the next frontier.

No more relying on cloud servers for everything. No more waiting on a network round trip. Far fewer data privacy nightmares, since your data stays on your device. Just powerful, personal AI at your fingertips.

DeepSeek didn’t create this vision—but it brought us a lot closer to making it real. It proved that performance doesn’t have to come at the cost of accessibility. And that opens the door to a more distributed, more democratic AI future.

Final Thoughts: Why DeepSeek Deserves Your Attention

In the end, DeepSeek might not be the flashiest model. But that’s exactly why it’s so important.

It’s a reminder that innovation isn’t always about size—it’s about smarts. That the next leap forward might come not from a billion-dollar lab, but from a few lines of clever math. That the future of AI isn’t just about what we build, but how we build it.

And if that future is smarter, smaller, and more energy-conscious?

Then maybe DeepSeek didn’t just tweak a model. Maybe it helped tweak the course of AI itself.

Read the DeepSeek research paper here (for the curious minds):
