Research

-

DeepSeek: The Quiet Math Tweak That Might Redefine the AI Future

It didn’t arrive with fireworks or fanfare. DeepSeek slipped into the scene with a simple mathematical change—and suddenly, it was the name on everyone’s lips. But what’s really behind the hype? Let’s unpack how a humble paper sparked global buzz, and what it means for the future of AI, geopolitics, and the race for smarter,…

-

Attention is All You Need – The Paper That Changed AI Forever

Before 2017, AI models were powerful but painfully slow and complicated. Then came a research paper titled “Attention is All You Need”, a game-changer that turned much of what we knew about AI on its head. Here’s how it reshaped the field, explained as simply as possible.

-

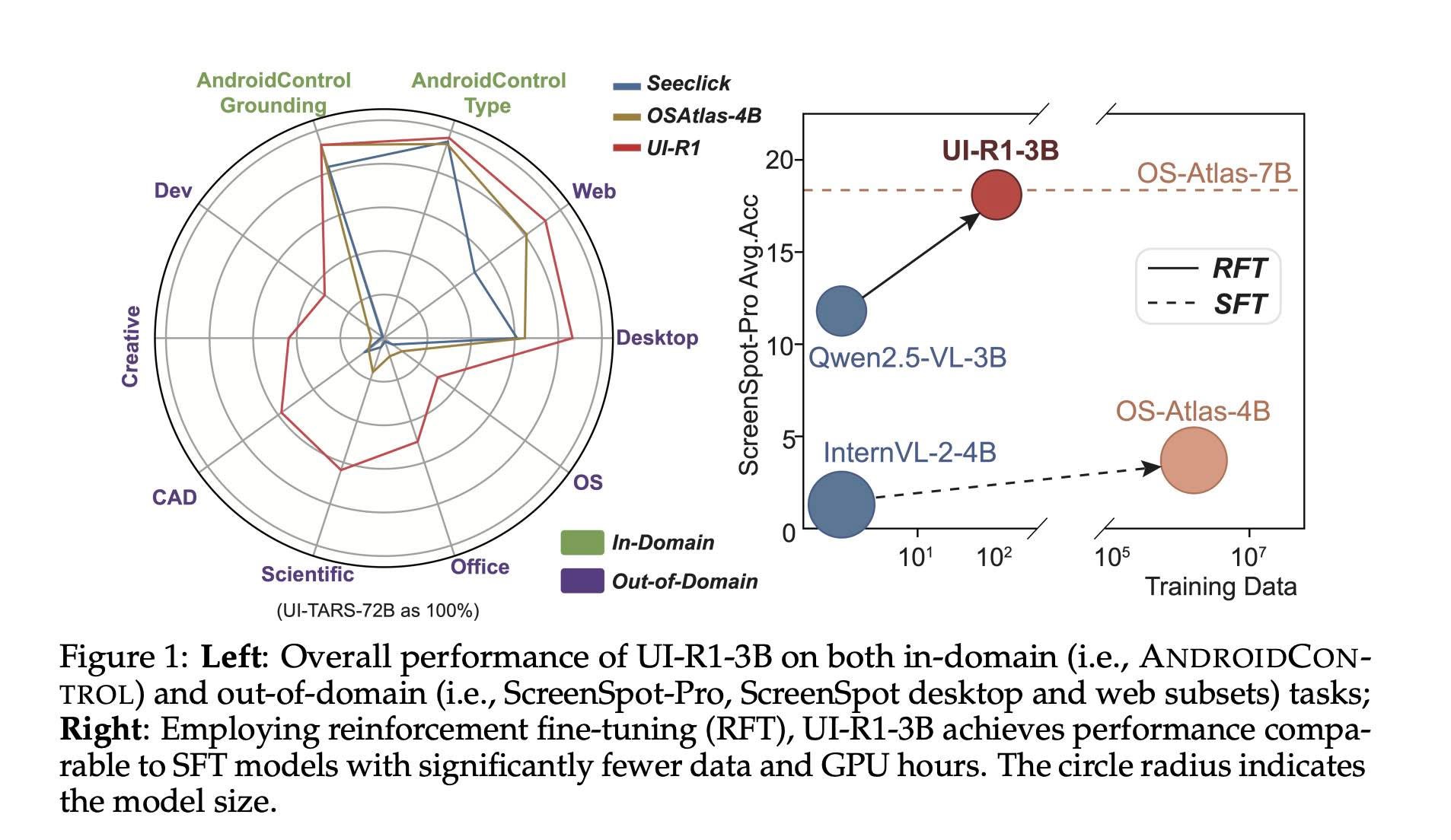

UI-R1: Teaching AI to Navigate Your Screen Like a Pro

What if your phone could predict your next tap or swipe? UI-R1, a new AI model trained with reinforcement learning, is bringing us closer to smarter, more intuitive virtual assistants. By learning from visuals and language—not just massive datasets—UI-R1 shows how AI can interact with real-world mobile apps more accurately and efficiently.

-

How Do Language Models Learn Facts? Inside the Mysterious Memory of AI

How do large language models actually learn facts? A new study by Google DeepMind and ETH Zürich uncovers a surprising three-phase process—from slow starts to sudden insights and unexpected memory loss. These findings reveal why your AI assistant sometimes nails the answer—and sometimes confidently makes things up.

-

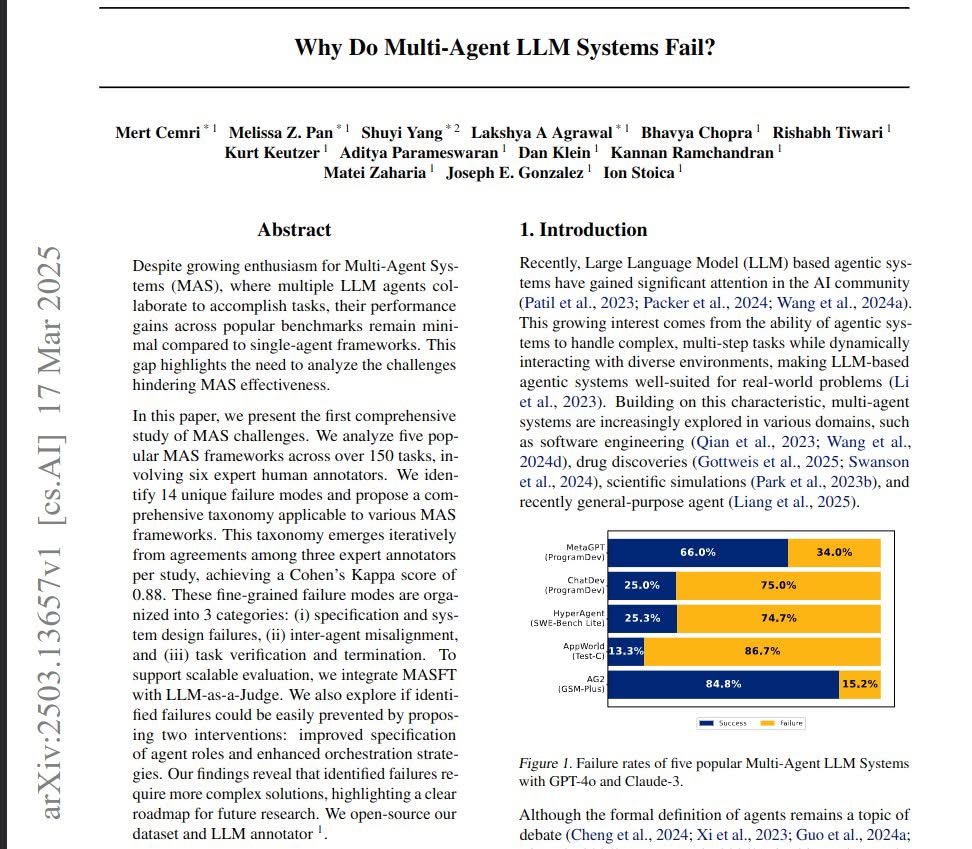

Why Do Multi-Agent AI Systems Keep Failing? A Look Into the Latest Research

Multi-agent AI systems promise intelligent collaboration between agents, so why do they so often underperform? A new UC Berkeley study digs into the causes of these failures, highlighting critical design flaws, coordination issues, and verification challenges, and offers a roadmap for building better systems.
