LLM

From Model to Product: Foundations for Practical AI Engineering

Everyone is building with AI today. Very few are building systems that actually work in production. This article explores the real gap between models and products, and why thinking like an AI engineer matters more than ever.

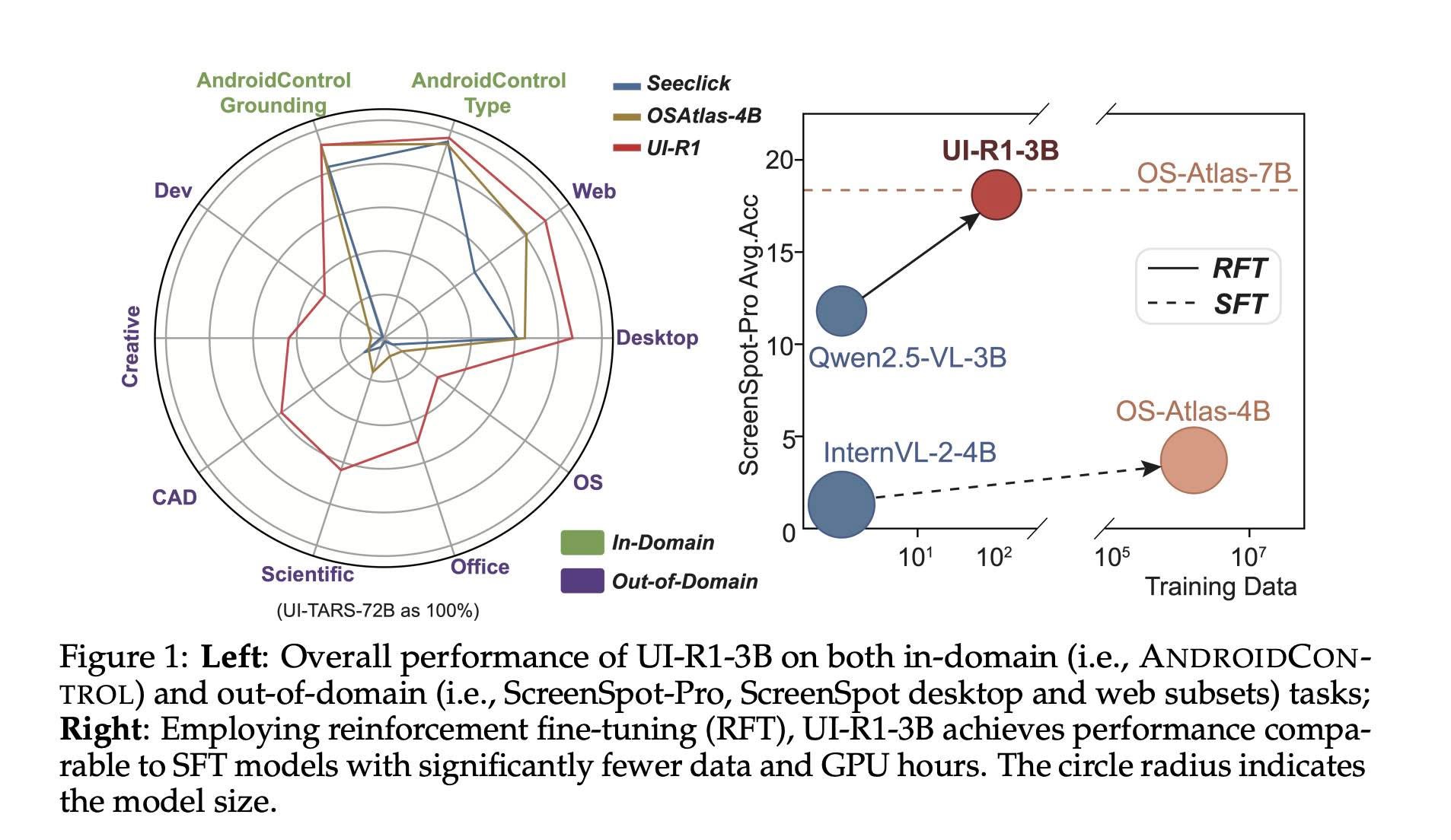

UI-R1: Teaching AI to Navigate Your Screen Like a Pro

What if your phone could predict your next tap or swipe? UI-R1, a new AI model trained with reinforcement learning, is bringing us closer to smarter, more intuitive virtual assistants. By learning from visuals and language—not just massive datasets—UI-R1 shows how AI can interact with real-world mobile apps more accurately and efficiently.

How Do Language Models Learn Facts? Inside the Mysterious Memory of AI

How do large language models actually learn facts? A new study by Google DeepMind and ETH Zürich uncovers a surprising three-phase process—from slow starts to sudden insights and unexpected memory loss. These findings reveal why your AI assistant sometimes nails the answer—and sometimes confidently makes things up.

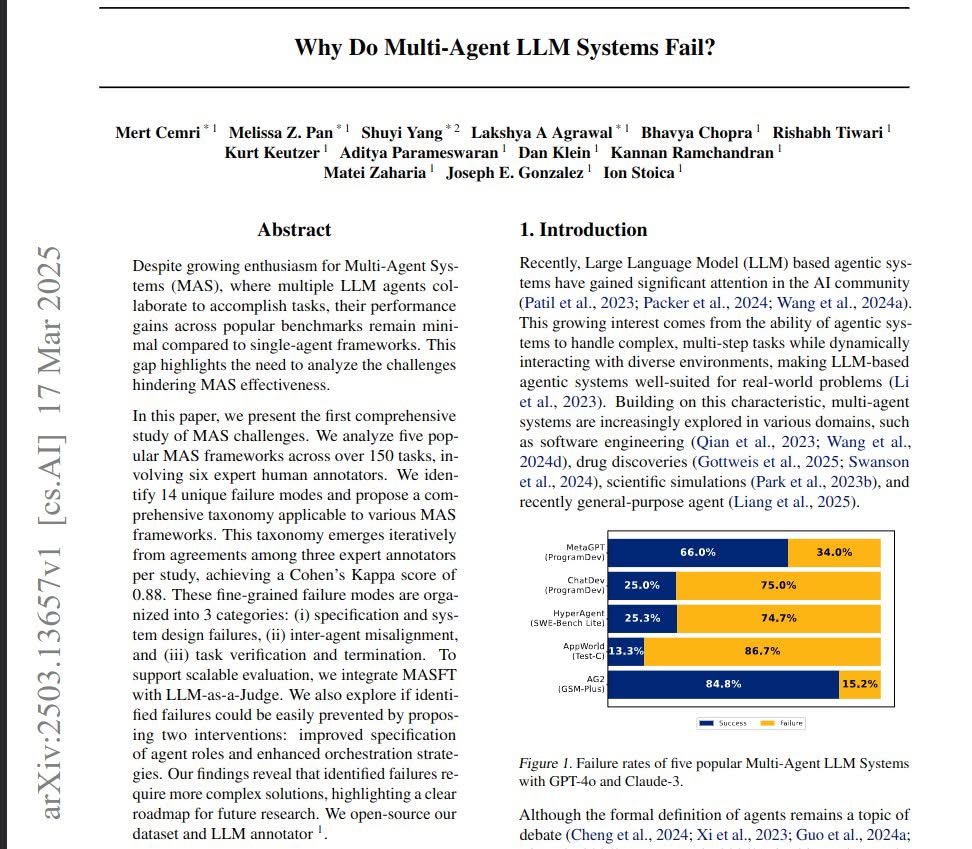

Why Do Multi-Agent AI Systems Keep Failing? A Look Into the Latest Research

Multi-agent AI systems promise intelligent collaboration between agents, so why do they so often underperform? A new UC Berkeley study digs into the causes of these failures, highlighting critical design flaws, coordination issues, and verification challenges, and offers a roadmap for building better systems.

Understanding Large Language Models (LLMs): The Basics of Their Math, Training, and Inference

Large Language Models (LLMs) have transformed the world of artificial intelligence, enabling machines to generate human-like text, answer questions, and even write code. But how do they actually work? This article breaks down the key concepts behind LLMs in a way that is easy to understand, with just enough math to show how things come…
