The idea of AI agents teaming up to solve complex problems sounds like something out of a sci-fi dream—and for years, it’s been a hot topic in AI research. These systems, known as Multi-Agent Systems (MAS), promise a future where intelligent agents can collaborate, divide up tasks, and deliver smarter, faster results. But in practice? They often flop.

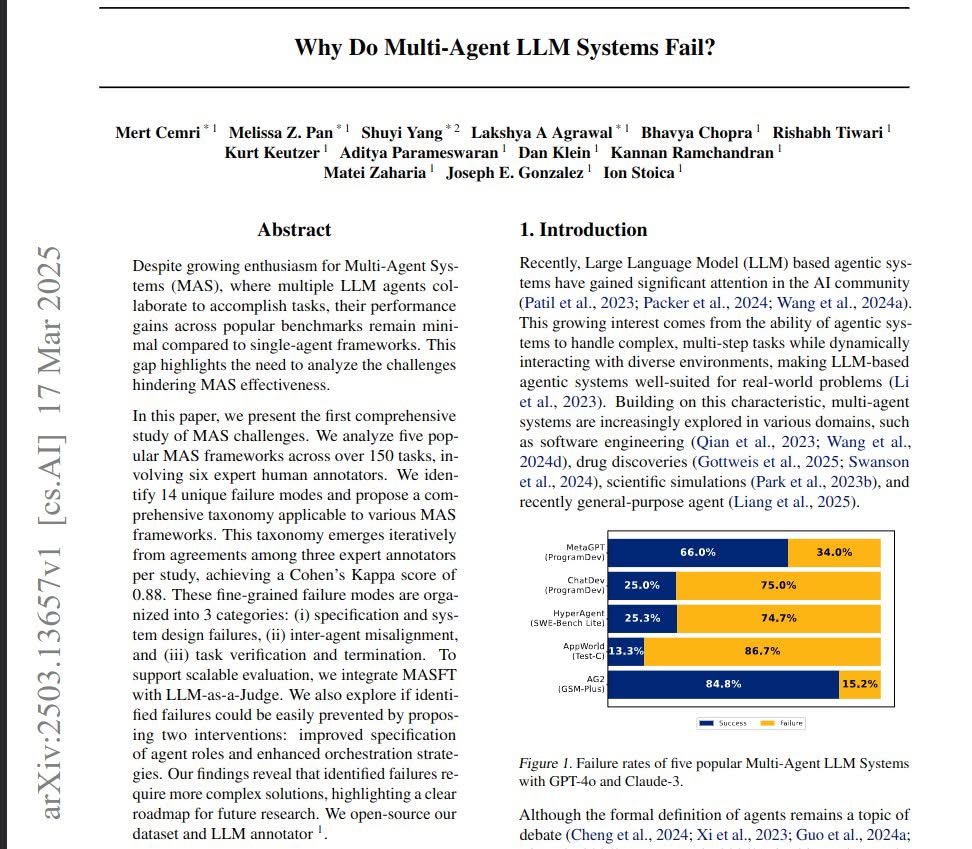

A new study out of UC Berkeley, titled “Why Do Multi-Agent LLM Systems Fail?”, takes a hard look at what’s going wrong. The research team, which includes AI heavyweights like Dan Klein, Matei Zaharia, Ion Stoica, and others, goes well beyond the surface. Evaluating five well-known multi-agent frameworks across 150+ tasks, they ask the big question: Why aren’t these systems working as expected?

And the short answer? It’s complicated.

The Anatomy of a Breakdown

The researchers break down the failure modes into 14 distinct types, grouped into three main buckets:

1. System Design Failures

Sometimes the foundation is shaky. If the roles of the agents aren’t clearly defined, or the rules guiding their behavior are too fuzzy, everything built on top starts to crumble.

2. Misalignment Between Agents

Think of it like a group project gone wrong: teammates step on each other’s toes or pull in different directions. The agents often fail to coordinate, leading to contradictions, duplicated effort, or just plain confusion.

3. Trouble Knowing When They’re Done (or Right)

Even when the agents “finish” a task, there’s no clear consensus on whether it’s actually complete or correct. Without robust methods for verifying results, these systems can declare victory far too soon—or keep going long after they should’ve stopped.
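To make the idea concrete, here is a minimal sketch of an explicit verification-and-termination loop. It is an illustration only, not the paper's method or any framework's API: `run_with_verifier`, `toy_propose`, and `toy_verify` are hypothetical names, and the toy functions stand in for real agents.

```python
from typing import Callable, Optional


def run_with_verifier(
    propose: Callable[[int], str],
    verify: Callable[[str], bool],
    max_rounds: int = 5,
) -> Optional[str]:
    """Return the first answer the verifier accepts, or None if the budget runs out.

    Hypothetical helper: `propose` stands in for a worker agent and
    `verify` for an independent checker agent.
    """
    for round_index in range(max_rounds):
        candidate = propose(round_index)
        # Stop only when an independent check passes, not when the
        # worker itself claims to be finished.
        if verify(candidate):
            return candidate
    # A hard round limit prevents the system from running forever.
    return None


# Toy stand-ins for real agents (assumptions for illustration only).
def toy_propose(round_index: int) -> str:
    return str(40 + round_index)


def toy_verify(candidate: str) -> bool:
    return candidate == "42"


answer = run_with_verifier(toy_propose, toy_verify)
print(answer)
```

The two guards map onto the two failure directions described above: the verifier keeps the system from declaring victory too soon, and the round limit keeps it from going on long after it should have stopped.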

Can We Just Fix the Easy Stuff?

The team didn’t stop at identifying the problems. They also ran controlled experiments to see if simple changes—like giving agents clearer roles or introducing better orchestration strategies—could help.

Spoiler: not really.

While these tweaks improved things in some cases, they didn’t address the deeper issues. These failures are more than just minor bugs or tuning problems—they reflect fundamental challenges in building truly collaborative AI.

So, What’s Next?

Instead of just diagnosing the problems and walking away, the team open-sourced their dataset and evaluation tools, hoping to empower other researchers to build on their work. It’s a significant step toward creating more reliable, scalable multi-agent systems.

Their takeaway? It’s time for a more thoughtful approach to MAS design—one that digs into the mechanics of how agents communicate, coordinate, and verify their progress. As exciting as multi-agent AI is, it won’t reach its potential unless we confront these structural flaws head-on.

So if you’ve been watching the multi-agent space and wondering why it’s been more talk than transformation, this paper is a must-read.

Read the full paper here:

Why Do Multi-Agent LLM Systems Fail?

AI collaboration is hard—but understanding why it fails is the first step toward making it work.
